Product Operating Model - The Intake

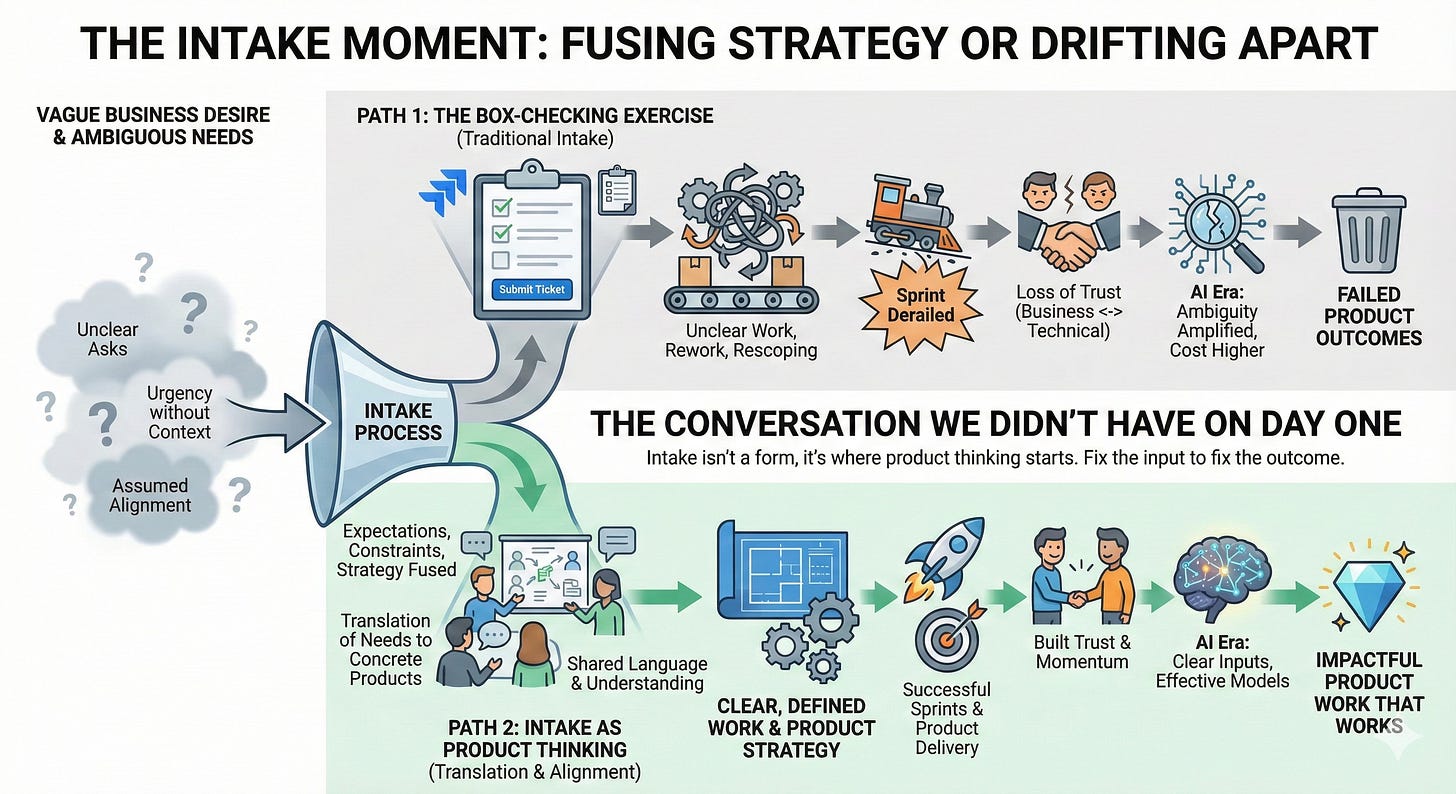

Intake is the unsexy, bureaucratic-sounding corner of product management that most people try to automate away with a Jira form. But it is exactly where expectations, constraints, urgency, and strategy either fuse together or drift apart. It is the moment where a vague business desire hits the reality of enterprise data. When I look at why a sprint derailed or why a stakeholder is frustrated three months down the line, the root cause is almost always traceable to a conversation we didn’t have on day one. This isn’t a process rant. I don’t care about rigid templates. I care about building products that actually work, and that is impossible if we don’t fix how work enters the system.

I’ve watched this pattern repeat across enterprise platforms, analytics teams, and AI product work. Unclear asks become unclear work, which becomes rework, rescoping, and eventually a loss of trust between business stakeholders and technical teams. It’s not about bad people or broken processes. It’s about the fundamental challenge of translating ambiguous needs into concrete products when everyone assumes they’re aligned until the moment they’re not. Intake is where product thinking should start—but often doesn’t. Instead, it becomes a box-checking exercise, a ticket in a queue, a handoff between groups that speak different languages. When intake breaks down, everything downstream compounds the problem. In the AI era, where ambiguity gets amplified through models and automation, the cost of unclear intake is even higher.


Why Intake Is So Much Harder Than It Sounds

The core issue is simple but hard to solve: stakeholders ask for outputs, not problems. They want a dashboard, a report, a model, a chatbot. They rarely say what decision they’re trying to make, what action they want to take, or what will change if they get what they’re asking for. This isn’t because they’re lazy or thoughtless—it’s because outputs are easier to describe than problems. Problems require context, constraints, and clarity about what success looks like. Outputs are concrete, visual, relatable.

On the other side of the table, data and engineering teams are conditioned to respond with effort. We see a request, and our instinct is to estimate the points, assess the technical feasibility, and start building. We assume alignment because the request seems tangible. If someone asks for a churn model, we know how to build a churn model.

But this is where the illusion of alignment settles in. The stakeholder wants a churn model to justify a marketing spend; the data scientist is building it to understand which user behaviors predict churn. The output is the same, but the success criteria are completely different. When you add AI to this mix, the confusion accelerates. “We need AI to search our contracts” sounds simple until you realize nobody agrees on what “search” actually means in a legal context. The friction isn’t malicious; we are simply speaking different dialects of the same language.

By the time teams realize the ask wasn’t clear, they’ve already invested weeks into something that doesn’t quite fit the need. Alignment is an illusion until it’s tested.

The Real Cost of Unclear Intake

The cost isn’t measured in dollars—it’s measured in momentum and trust. Work gets rescoped midstream because the original ask didn’t capture the real need. Teams duplicate analysis because no one knew someone else already built something similar. Priorities shift without context because intake didn’t surface dependencies or tradeoffs. Roadmaps become reactive instead of strategic because every urgent request looks equally important at the front door.

AI prototypes look wrong because the request wasn’t defined. A model might be technically sound but feel off because no one clarified what good looks like, what constraints matter, or what edge cases break trust. The feedback loop becomes longer, more expensive, and more frustrating for everyone involved. Business teams think technical teams don’t understand their needs. Technical teams think business teams don’t know what they want. Both are right, and both are stuck.

The compounding effect is what kills momentum. One unclear intake leads to rework. Rework leads to missed deadlines. Missed deadlines lead to lost confidence. Lost confidence leads to more urgent requests, shorter timelines, and less time to clarify the next ask. The system speeds up and gets worse at the same time. Teams end up working harder on things that matter less because intake never forced the hard questions up front.

Intake as a Product Discipline (Not Project Admin)

Intake isn’t a ticketing system. It’s where product thinking starts. It’s the moment you decide whether to build something, what to build, and for whom. It’s where value, feasibility, and scope intersect before anyone commits to execution. Treating intake as administrative work—filling out forms, assigning priorities, routing requests—misses the entire point. Good intake is how you avoid building the wrong thing quickly.

This is where translation matters most. Business teams speak in outcomes, urgency, and impact. Engineering teams speak in systems, data models, and technical constraints. Data teams speak in transformations, quality, and governance. AI and ML teams speak in training data, feature engineering, and inference patterns. Intake is where these languages collide, and someone needs to translate. Not just repeat what each group said, but synthesize it into a shared understanding of what problem exists, what success looks like, and what tradeoffs are acceptable.

Great intake doesn’t produce better tickets. It produces better products. It aligns incentives across groups before work starts. It surfaces conflicts early when they’re cheap to resolve. It builds shared context so teams can move faster later. It creates space for feasibility checks before commitments are made. It turns requests into roadmaps and urgency into strategy. This is product work, not project management.

What Good Intake Actually Looks Like

Good intake starts with clear problem framing. Not what the stakeholder wants to build, but what problem they’re trying to solve. What decision is blocked? What action can’t be taken? What inefficiency is costing time, money, or trust? The framing forces specificity and exposes assumptions. A request for a dashboard becomes a question about how pricing decisions are made. A request for a model becomes a question about how recommendations should adapt to user behavior.

Next comes the desired outcome and success metrics—not vanity metrics or effort indicators, but concrete measures of whether the problem was solved. Did the decision get made faster? Did the action happen more often? Did the inefficiency decrease? These metrics ground the work in reality and create accountability for both the requester and the team building the solution.

Context matters more than most teams admit. Business context: why now, why this, why us? Data context: what exists, what’s missing, what transformations are needed, and which gold-layer (curated, business-ready) assets apply? Operational context: who owns this domain, who makes decisions, who will use this, and how does it fit into existing workflows? Without context, teams build in a vacuum and hope it works when it lands.

Domain ownership and decision-maker clarity prevent downstream confusion. Who is responsible for this area? Who has authority to say yes or no? Who will prioritize competing requests? If ownership is unclear, work stalls when tradeoffs emerge. If decision-making is ambiguous, teams iterate indefinitely without resolution.

Boundaries define what this is not: what problems are out of scope, what use cases won’t be supported, what edge cases are acceptable risks. Boundaries create focus and prevent scope creep while setting expectations so stakeholders know what they’re getting and what they’re not.

Early feasibility checks happen before commitments are made. Does the data exist? Is it accessible? What transformations are required? Are there gold-layer assets that already solve part of this? Can the team realistically deliver in the timeframe? Feasibility checks don’t kill projects—they prevent teams from starting work they can’t finish.
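One way to make these questions concrete, without turning them into a rigid template, is a small record that tracks which ones are still open. The sketch below is illustrative only: `IntakeRequest`, its field names, and `open_questions` are hypothetical names I made up for this example, not part of any particular tool.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class IntakeRequest:
    """Hypothetical intake record; every field name here is illustrative."""
    requested_output: str                       # what was asked for, e.g. "a dashboard"
    problem: str = ""                           # the decision or action that is blocked
    success_metrics: List[str] = field(default_factory=list)
    business_context: str = ""                  # why now, why this, why us
    domain_owner: str = ""                      # who is responsible for this area
    decision_maker: str = ""                    # who can say yes or no
    out_of_scope: List[str] = field(default_factory=list)
    data_exists: Optional[bool] = None          # None means nobody has checked yet
    data_accessible: Optional[bool] = None      # None means nobody has checked yet


def open_questions(req: IntakeRequest) -> List[str]:
    """List the intake questions that are still unanswered for this request."""
    checks = [
        (bool(req.problem), "What decision or action is blocked?"),
        (bool(req.success_metrics), "How will we know the problem was solved?"),
        (bool(req.business_context), "Why now, why this, why us?"),
        (bool(req.domain_owner), "Who owns this domain?"),
        (bool(req.decision_maker), "Who has authority to say yes or no?"),
        (bool(req.out_of_scope), "What is explicitly out of scope?"),
        (req.data_exists is not None, "Does the data exist?"),
        (req.data_accessible is not None, "Is the data accessible?"),
    ]
    return [question for answered, question in checks if not answered]


# A bare output request, as it often arrives at the front door.
request = IntakeRequest(requested_output="pricing dashboard")
for question in open_questions(request):
    print(question)
```

The point of the sketch is the list of open questions, not the record itself. A request that names only an output leaves every question unanswered, and that list is the agenda for the first conversation.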

For AI work, intake needs additional clarity. What is the intent behind the model or agent? What action should it take or enable? What constraints or guardrails matter for safety, bias, or compliance? Who has access to the output or decision? What signals indicate the system is working or failing? AI without this clarity becomes a black box that stakeholders don’t trust and teams can’t debug.
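The AI-specific questions can be tracked the same way. This is a minimal sketch under my own assumptions: `AIIntake` and its field names are hypothetical, chosen to mirror the five questions above rather than any real framework.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AIIntake:
    """Hypothetical AI-specific intake fields, one per question in the text."""
    intent: str = ""                                           # what the model or agent is for
    enabled_action: str = ""                                   # what it should take or enable
    guardrails: List[str] = field(default_factory=list)        # safety, bias, compliance
    output_audience: List[str] = field(default_factory=list)   # who can see the output
    health_signals: List[str] = field(default_factory=list)    # working vs. failing

    def gaps(self) -> List[str]:
        """Return the names of fields that are still empty."""
        return [name for name, value in vars(self).items() if not value]


# "We need AI to search our contracts" states an intent and little else.
ask = AIIntake(intent="search our contracts")
print(ask.gaps())
```

An empty `gaps()` result does not mean the request is good. It only means each question has at least been answered, which is the minimum before anyone starts building.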

When I think about scaling platform adoption from one studio group to five, good intake was the difference. It wasn’t about building faster—it was about building the right things for the right reasons with the right expectations. Intake forced alignment before work started, which meant less rework, more momentum, and stronger relationships with stakeholders.

AI Raises the Stakes

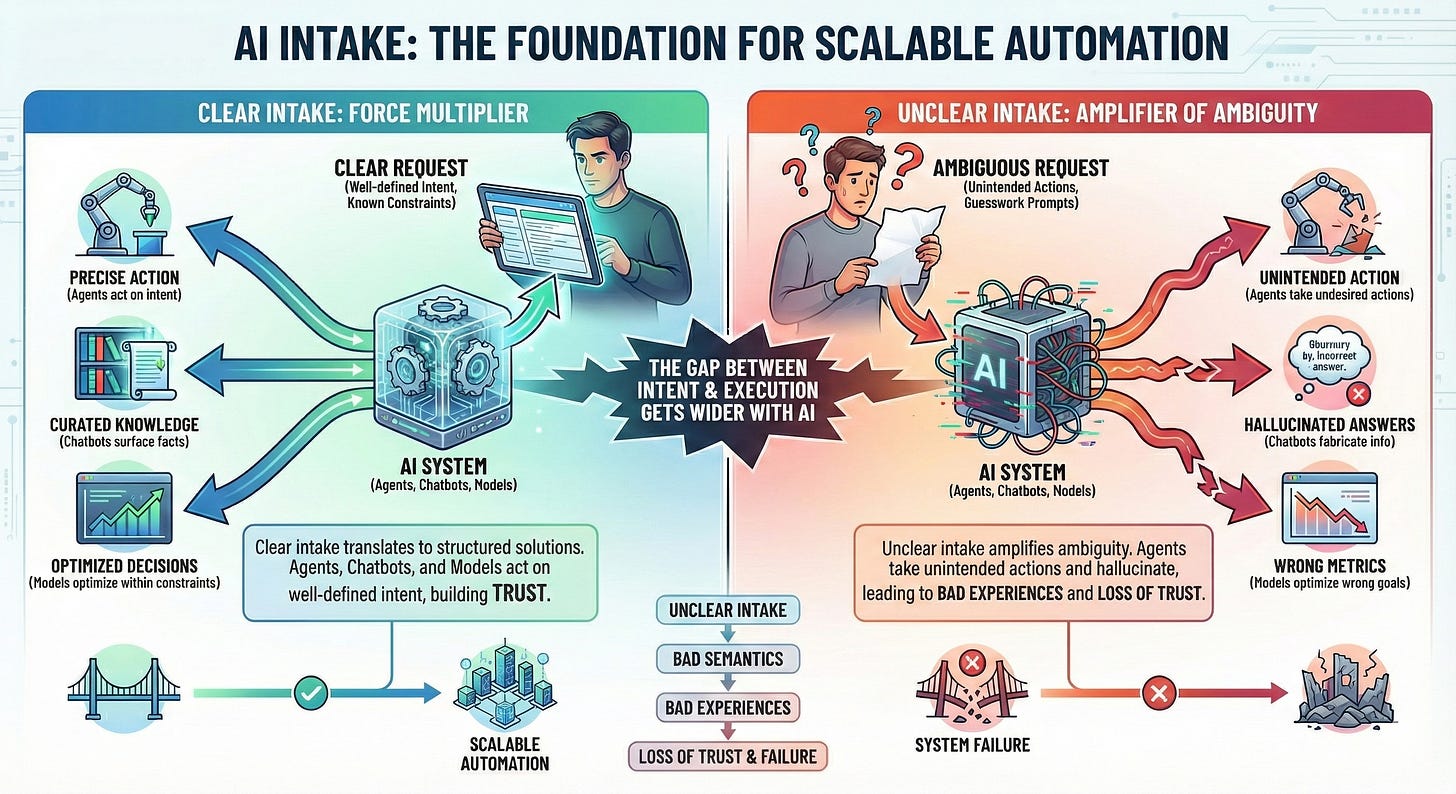

AI amplifies everything about intake—the good and the bad. When intake is clear, AI becomes a force multiplier. Agents can act on well-defined intent. Chatbots can surface curated knowledge. Models can optimize decisions within known constraints. The structure of the request translates directly into the structure of the solution.

When intake is unclear, AI becomes an amplifier of ambiguity. Agents take actions no one intended. Chatbots hallucinate answers because the knowledge base wasn’t scoped. Models optimize the wrong metrics because success wasn’t defined. Prompts become guesswork. Outputs feel wrong but teams can’t articulate why. Trust erodes faster than it builds.

The gap between intent and execution gets wider with AI. A human can interpret an ambiguous request and course-correct in real time. An AI system can’t—it does exactly what it was told, which might not be what anyone meant. Unclear intake leads to bad semantics, bad semantics lead to bad experiences, and bad experiences lead to loss of trust. Once stakeholders stop trusting the AI product, it doesn’t matter how good the underlying model is.

Good intake becomes the foundation for scalable automation. It defines what the system should do, what it shouldn’t do, and how to know the difference. It creates boundaries so agents can act autonomously without constant oversight. It aligns expectations so stakeholders understand what AI can and can’t deliver. Intake is no longer a nice-to-have—it’s a prerequisite for AI products that work.

How Intake Shapes Product Growth and Team Maturity

At scale, intake becomes the backbone of everything else. When every request goes through structured intake, teams can compare asks, identify patterns, and make strategic tradeoffs. It shapes prioritization. It informs pod-level roadmaps. It enables better allocation and sequencing. It gives stakeholders confidence that we’re building the right things in the right order. The conversation shifts from “why isn’t this done yet?” to “what should we focus on next?”

This creates a culture of clarity—where teams think before they execute. Teams learn to ask better questions. Stakeholders learn to frame problems instead of prescribing solutions. Everyone gets better at translation—understanding what different groups need and how to bridge the gaps. This compounding clarity makes future work faster and more effective.

Intake is the shift from reactive execution to intentional product work. It’s the difference between working a queue and leading a portfolio. A team that treats intake as administrative work will always feel behind, always feel reactive, always feel like they’re building the wrong things. A team that treats intake as product discipline will build momentum, trust, and strategic impact over time.

This is also where teams grow. Intake isn’t a junior skill. It’s a strategic one. It’s where product managers learn to think like translators—connecting business motivations, technical realities, and data foundations in a way that moves work forward instead of creating more noise. Leading thirteen people across eight pods taught me that intake is where teams learn to think, not just execute. It’s where junior team members see how product strategy gets translated into concrete work. It’s where cross-functional collaboration gets practiced before the pressure of delivery. It’s where the translation skill becomes visible and teachable. Good intake doesn’t just produce better products—it produces better teams.

A Simple Reflection

Intake won’t be the flashy part of the work. No one writes case studies about how they improved request clarity. No one gets promoted for building better problem framing. It’s invisible when it works and obvious when it doesn’t.

But it’s the difference between teams that grow and teams that stall. Between roadmaps that reflect strategy and roadmaps that reflect whoever shouted loudest. Between products that solve real problems and products that chase ambiguous outputs.

Want to improve product outcomes? Improve how work enters the system. Clear inputs create compounding product value. Everything else follows from there.
