Product Alchemist

We have reached a breaking point in the discipline of product management.

For the last fifteen years, the tech industry has heralded the “Generalist PM”: the agile utility player who could parachute into any problem space and find a solution. The theory was seductive: if you mastered the universal frameworks of discovery, prioritization, and stakeholder management, the specific domain knowledge was secondary. In theory, a good Generalist could manage a B2C mobile app on Monday, pivot to a B2B SaaS dashboard on Tuesday, and oversee a logistics platform on Wednesday.

In the era of Generative AI and Large Language Models (LLMs), that theory is dead.

As I look across the landscape of enterprise tech, and draw on my own years of experience leading complex data platforms and scaling AI products, I see the “Generalist” model collapsing under the weight of stochastic systems. The toolkit that built the Web 2.0 era (user stories, deterministic acceptance criteria, and linear roadmaps) is insufficient for the probabilistic reality of AI.

You cannot manage an LLM-based product the same way you manage a deterministic web form. When the output of your product varies every time a user interacts with it, “acceptance criteria” becomes a philosophical debate rather than a checkbox.
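One way to make that debate concrete is to state acceptance as a pass rate over many sampled outputs rather than as a single pass/fail check. The snippet below is a minimal sketch of that idea, not a definitive evaluation harness: `make_fake_model`, the 90% success probability, and the 0.85 threshold are all hypothetical stand-ins, and the fake model replaces a real LLM call.

```python
import random
from typing import Callable


def pass_rate(generate: Callable[[str], str],
              check: Callable[[str], bool],
              prompt: str,
              n_samples: int = 200) -> float:
    """Sample the model n_samples times and return the fraction that pass."""
    passed = sum(1 for _ in range(n_samples) if check(generate(prompt)))
    return passed / n_samples


def meets_criterion(rate: float, threshold: float) -> bool:
    """Probabilistic acceptance: the observed pass rate must reach the threshold."""
    return rate >= threshold


def make_fake_model(seed: int, success_prob: float) -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM call that answers well only some of the time."""
    rng = random.Random(seed)

    def model(prompt: str) -> str:
        return "refund approved" if rng.random() < success_prob else "I am not sure"

    return model


model = make_fake_model(seed=0, success_prob=0.9)
rate = pass_rate(model, lambda out: "refund" in out, "Can I get a refund?")
print(f"pass rate: {rate:.2f}, accepted: {meets_criterion(rate, threshold=0.85)}")
```

The design choice worth noticing is that the threshold, not the individual output, becomes the thing the PM negotiates and signs off on.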

We don’t need more ticket-movers. We don’t need more Scrum masters disguised as Product Managers. We need Product Alchemists.

We need a new breed of leader who doesn’t just “manage” the roadmap but actively transmutes raw technical capabilities—data assets, model weights, and inference strategies—into gold-standard market value. Here is why the old blueprint is obsolete, and what the new one looks like.

The Shift: From “Cool Demo” to Strategic Imperative

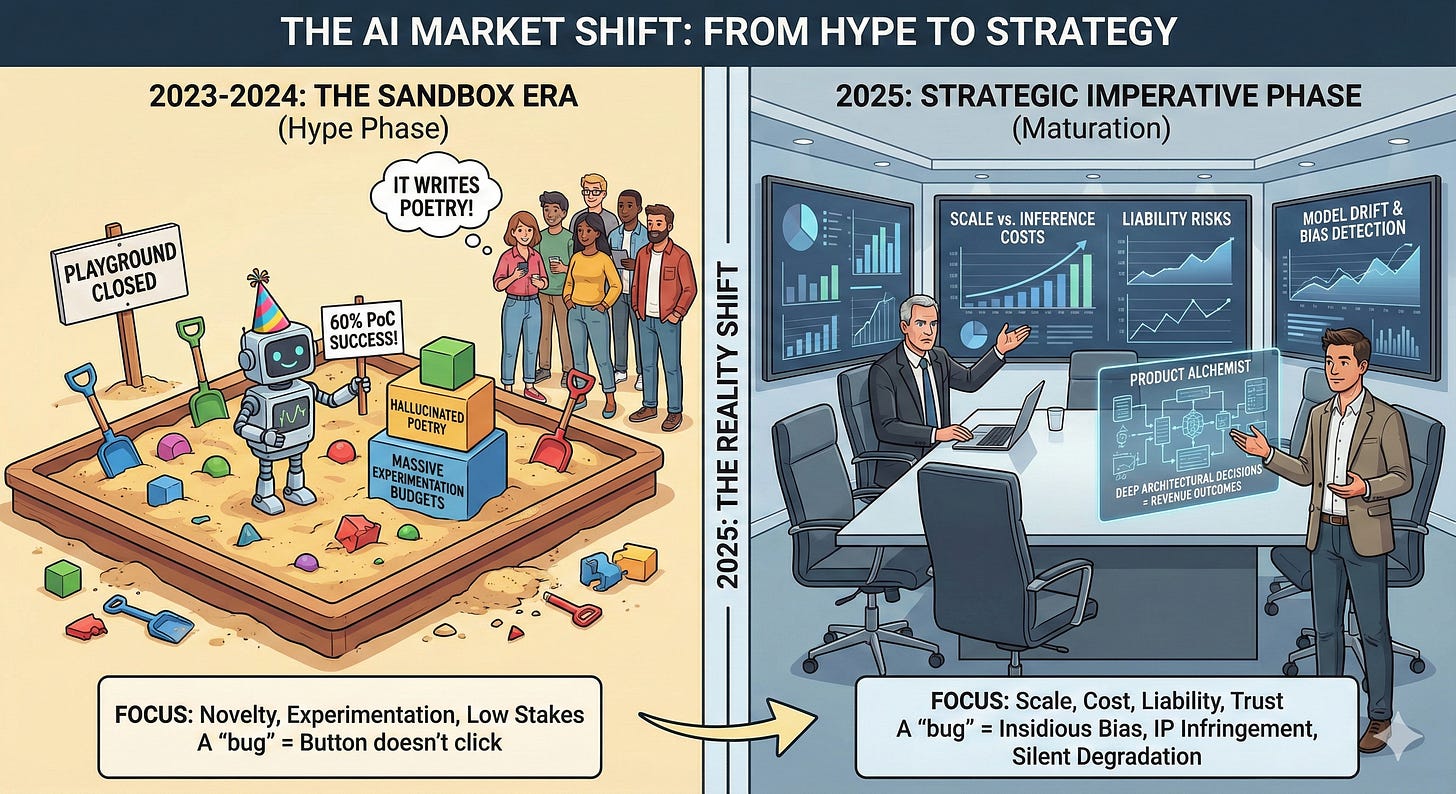

To understand the urgency of this shift, we have to look at the rapid maturation of the market.

In 2023 and early 2024, we were collectively living in the “Sandbox Era.” Companies were throwing massive budgets at AI experimentation. If a PM delivered a Proof of Concept (PoC) that worked 60% of the time, it was celebrated as a victory. The novelty of the technology masked its flaws. “It writes poetry!” was enough to secure funding, even if the poetry was hallucinated.

Welcome to the reality of 2025. The playground is closed.

We have moved from the Hype Phase to the Strategic Imperative Phase. CFOs and Boards are no longer asking, “Can we build this?” They are asking, “Can this scale to millions of users without exploding our inference costs?” and “What is the liability if this model gives bad financial advice?”

The stakes have changed dramatically. In the traditional software world, a “bug” meant a button didn’t click or a page didn’t load. In the AI world, a “bug” is far more insidious. It can mean subtle algorithmic bias, IP infringement, or a silent degradation of model quality (drift) that erodes user trust over weeks before anyone notices.

A Generalist PM looks at these problems and calls Engineering, assuming it’s a code fix. A Product Alchemist anticipates these problems and builds the entire product strategy around them. They understand that in this new era, the model is the product, and revenue outcomes are inextricably linked to deep architectural decisions.

The Core Problem: The “Upskilling” Fallacy

The common industry response to this talent gap has been “upskilling.” Organizations are sending their Generalist PMs to two-day workshops on “AI for Business Leaders” or buying them a Coursera subscription, hoping they figure it out.

This is a dangerous fallacy. It assumes the gap is merely vocabulary. The gap is actually mindset.

The Generalist PM is trained to prioritize velocity and feature release. They treat the underlying technology as a “black box”—inputs go in, magic happens, outputs come out. They focus on the UI/UX wrapper around the box. But in an AI-led Product-Led Growth (PLG) environment, you cannot treat the tech as a black box. The magic inside the box is the only thing that matters.

If you don’t understand Context Windows, you cannot price your product correctly or understand why users are frustrated when the bot “forgets” earlier instructions.

If you don’t understand RAG (Retrieval-Augmented Generation) vs. Fine-tuning, you cannot promise the right level of personalization or data freshness to your customers.

If you don’t understand Probabilistic Outcomes, you will promise a roadmap you cannot deliver, because you are trying to force a non-deterministic square peg into a deterministic round hole.
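To illustrate the context window point, here is a rough sketch of why a bot can “forget” an early instruction: once the conversation exceeds the token budget, the oldest messages are the first to be dropped. This assumes a simple keep-the-most-recent policy and a crude one-token-per-word count; real tokenizers and real products handle this differently, and `fit_to_window` is a hypothetical helper, not any vendor's API.

```python
from typing import Dict, List


def count_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def fit_to_window(messages: List[Dict[str, str]], max_tokens: int) -> List[Dict[str, str]]:
    """Keep the most recent messages that fit inside the token budget."""
    kept: List[Dict[str, str]] = []
    used = 0
    # Walk backwards from the newest message and stop when the budget is spent.
    for msg in reversed(messages):
        cost = count_tokens(msg["content"])
        if used + cost > max_tokens:
            break
        kept.append(msg)
        used += cost
    return list(reversed(kept))


history = [
    {"role": "user", "content": "Always answer in formal English please"},
    {"role": "assistant", "content": "Understood I will do so"},
    {"role": "user", "content": "Summarize our refund policy for enterprise customers"},
]

visible = fit_to_window(history, max_tokens=12)
# The first instruction no longer fits, so the model never sees it.
print([m["content"] for m in visible])
```

The same budget also drives pricing, since every token kept in the window is typically billed on every request.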

I see this friction constantly. When a Generalist PM tries to apply standard Agile velocity metrics to a Data Science team, the process breaks. You cannot “point” research. You cannot “sprint” your way to better model accuracy. The Product Alchemist knows that managing AI is less about managing an assembly line and more about managing a research laboratory that needs to ship.

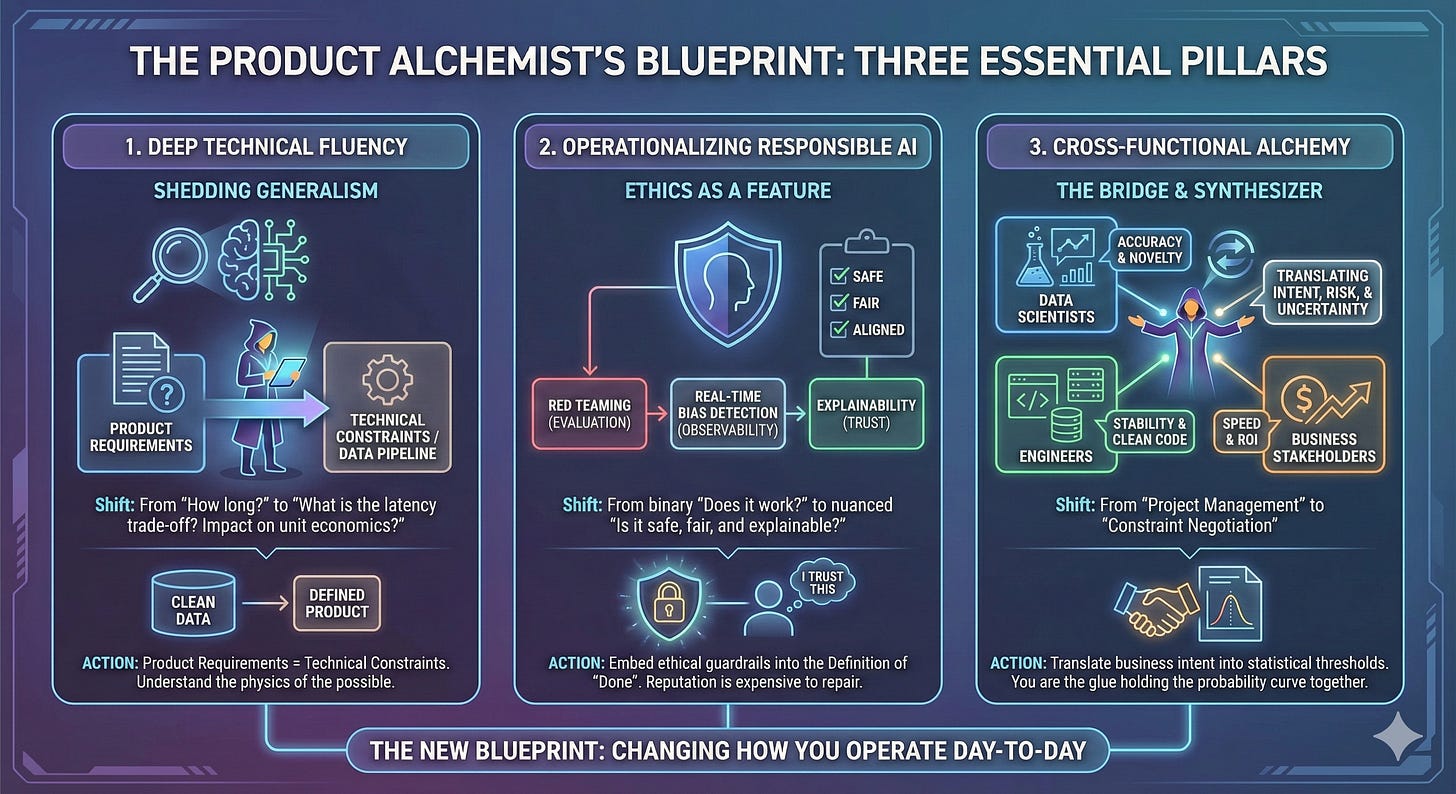

The Blueprint: 3 Pillars of the Product Alchemist

To survive this shift, we need a fundamentally new blueprint for the role. This isn’t just about adding “AI” to your LinkedIn headline; it’s about changing how you operate day-to-day. Here are the three pillars of the Product Alchemist.

1. Shedding Generalism for Deep Technical Fluency

The Alchemist stops hiding behind the phrase “I’m non-technical.” In a PLG world driven by AI, technical fluency is the primary lever for growth.

You do not need to code the neural network, but you must understand the physics of the possible. You need to know enough to push back on engineers and enough to inspire data scientists.

The Shift: Instead of asking, “How long will this take?”, the Alchemist asks, “What is the latency trade-off if we switch from GPT-4 to a smaller, fine-tuned Llama model? How does that impact our unit economics?”

The Action: Stop separating “Product Requirements” from “Technical Constraints.” They are now the same document. Your ability to define the product depends entirely on your understanding of the data pipeline. If the data isn’t clean, the product doesn’t exist.
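The latency and unit-economics question above can be put into a back-of-the-envelope calculation. The sketch below is purely illustrative: the model names, per-token prices, latencies, subscription price, and usage figures are invented assumptions, not real vendor numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    usd_per_1k_input_tokens: float   # hypothetical price
    usd_per_1k_output_tokens: float  # hypothetical price
    p50_latency_s: float             # hypothetical median latency


def cost_per_request(m: ModelOption, input_tokens: int, output_tokens: int) -> float:
    """Inference cost of one request in USD."""
    return (input_tokens / 1000 * m.usd_per_1k_input_tokens
            + output_tokens / 1000 * m.usd_per_1k_output_tokens)


def monthly_margin(m: ModelOption, price_per_user_usd: float,
                   requests_per_user: int, input_tokens: int, output_tokens: int) -> float:
    """Subscription revenue per user minus that user's inference cost."""
    return price_per_user_usd - requests_per_user * cost_per_request(m, input_tokens, output_tokens)


large = ModelOption("large-hosted-model", 0.01, 0.03, p50_latency_s=2.5)
small = ModelOption("small-fine-tuned-model", 0.001, 0.002, p50_latency_s=0.6)

for option in (large, small):
    margin = monthly_margin(option, price_per_user_usd=20.0, requests_per_user=300,
                            input_tokens=2000, output_tokens=500)
    print(f"{option.name}: margin ${margin:.2f}/user/month, p50 latency {option.p50_latency_s}s")
```

A calculation like this does not settle the quality question, but it puts cost and latency in the same document as the product requirement.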

2. Operationalizing “Responsible AI” (Ethics as a Feature)

In the old world, “Trust & Safety” was often a post-launch support ticket, a legal review, or a moderation queue. For the Alchemist, Responsible AI is a core feature set, not a constraint to be annoyed by.

Leading platform work at an enterprise scale teaches you one thing quickly: Reputation is expensive to repair. If your AI agent hallucinates a discount that doesn’t exist, or outputs toxic content to a minor, the PR damage is instantaneous. The Alchemist integrates ethical guardrails into the Definition of “Done.”

The Shift: Moving from a binary “Does it work?” to a nuanced “Is it safe, fair, explainable, and aligned?”

The Action: Embed evaluation frameworks directly into the product lifecycle. This means budgeting time for “Red Teaming” (trying to break the model) and building observability tools that detect bias in real time. If the model is opaque, the user won’t trust it. If they don’t trust it, they won’t adopt it, and your PLG flywheel stops spinning.
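As a rough illustration of what such an observability tool might track, the sketch below keeps a rolling window of outcomes per user group and raises an alert when the gap between groups grows too large. It is a deliberately simplified assumption about how bias could be monitored: `RollingGapMonitor`, the group labels, the window size, and the 0.2 tolerance are hypothetical, and a real fairness review would need far more than one metric.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class RollingGapMonitor:
    """Tracks the rate of positive outcomes per group over the last `window` events."""

    def __init__(self, window: int, max_gap: float) -> None:
        self.max_gap = max_gap
        # One bounded queue per group; old events fall off automatically.
        self._events: Dict[str, Deque[int]] = defaultdict(lambda: deque(maxlen=window))

    def record(self, group: str, positive: bool) -> None:
        self._events[group].append(1 if positive else 0)

    def rates(self) -> Dict[str, float]:
        return {g: sum(d) / len(d) for g, d in self._events.items() if d}

    def gap(self) -> float:
        """Difference between the best- and worst-served group."""
        rates = self.rates()
        if len(rates) < 2:
            return 0.0
        return max(rates.values()) - min(rates.values())

    def alert(self) -> bool:
        return self.gap() > self.max_gap


monitor = RollingGapMonitor(window=10, max_gap=0.2)
for i in range(10):
    monitor.record("group_a", positive=i < 8)  # 8 of 10 approved
    monitor.record("group_b", positive=i < 4)  # 4 of 10 approved
print(monitor.rates(), "alert:", monitor.alert())
```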

3. Cross-Functional Alchemy (The Bridge)

This is the hardest part of the job. In my experience managing diverse pods, I constantly mediate a clash of three distinct cultures:

Data Scientists: Value accuracy, novelty, and academic rigor. They want to tweak the model forever to get that extra 0.5% precision.

Engineers: Value stability, uptime, and clean code. They hate the messiness of Jupyter notebooks and non-deterministic outputs.

Business Stakeholders: Value speed, ROI, and certainty. They want to know exactly what the AI will say before it says it (which is impossible).

Left alone, these groups talk past each other. The Generalist PM acts as a messenger, passing notes between these silos, often getting shot in the process.

The Alchemist acts as a Translator and Synthesizer.

The Shift: Moving from “Project Management” to “Constraint Negotiation.”

The Action: You must translate business intent into statistical thresholds for the Data Scientists (e.g., “We need 95% accuracy on this specific intent, but we can tolerate 80% on this other one”). Conversely, you must translate “model drift” into financial risk for the stakeholders. You are the glue holding the probability curve together. You are the one who has to look the stakeholder in the eye and explain why the AI gave a different answer today than it did yesterday—and why that’s actually a good thing.
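The per-intent thresholds in that example can be written down as a simple release gate. This is a minimal sketch under stated assumptions: the intent names, the tiny labeled sample, and the helper names `accuracy_by_intent` and `release_gate` are hypothetical, and real evaluation sets would be much larger.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def accuracy_by_intent(records: Iterable[Tuple[str, str, str]]) -> Dict[str, float]:
    """records are (intent, predicted, expected) triples from a labeled eval set."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for intent, predicted, expected in records:
        total[intent] += 1
        if predicted == expected:
            correct[intent] += 1
    return {intent: correct[intent] / total[intent] for intent in total}


def release_gate(accuracies: Dict[str, float], thresholds: Dict[str, float]) -> Dict[str, bool]:
    """An intent passes only if it was measured and meets its business threshold."""
    return {intent: accuracies.get(intent, 0.0) >= minimum
            for intent, minimum in thresholds.items()}


# Business intent expressed as numbers: strict where mistakes are costly, looser elsewhere.
thresholds = {"cancel_subscription": 0.95, "small_talk": 0.80}

eval_records = (
    [("cancel_subscription", "cancel", "cancel")] * 18
    + [("cancel_subscription", "upgrade", "cancel")] * 2
    + [("small_talk", "greeting", "greeting")] * 4
    + [("small_talk", "farewell", "greeting")] * 1
)

gate = release_gate(accuracy_by_intent(eval_records), thresholds)
print(gate)
```

Here the high-stakes intent misses its bar while the low-stakes one clears it, which is exactly the kind of trade-off the Alchemist has to explain to stakeholders.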

Conclusion: The Call to Action

The era of the “passable” Product Manager is over. The divide is widening.

On one side, we have the ticket-movers, who will increasingly find their roles automated by the very AI they are trying to build. On the other side, we have the Alchemists—the strategic leaders who can wield this chaotic, powerful technology to create genuine business value.

As we look toward the scaling challenges of 2026 and beyond, the market will mercilessly filter out those who cannot bridge the gap between business strategy and AI reality.

To the CPOs and hiring managers reading this: Stop hiring generic PMs and hoping they “catch up” to AI. It’s not fair to them, and it’s dangerous for your product. Look for the Alchemists. Look for the people who are curious about the data, who respect the complexity of the science, and who have the courage to own the outcome, not just the output.

To the aspiring AI PMs: Your new blueprint isn’t about learning Jira shortcuts or mastering a roadmap tool. It’s about deep-diving into the technology so you can lead responsibly. Don’t just build the product; understand the alchemy that makes it work.

The gold is there. But only for those who know how to transmute it.
